The State of AI Agents in 2026: Beyond the Hype — What 40% Enterprise Adoption Actually Looks Like

40% of enterprise apps may have AI agents by the end of 2026 — but the real divide is between companies running a system and companies running a demo.

The market is arguing hype vs transformation. That framing is too shallow for operators. The practical reality is that integration is accelerating while execution quality is diverging. Gartner’s 40% forecast is a momentum signal, not an automatic value signal.

This guide offers a contrarian but practical map for leaders making allocation decisions now.

Table of Contents

- 1) What ‘40% Adoption’ Actually Means (and What It Doesn’t)

- 2) Pattern One — Workflow Fit: Start Narrow, Win Measurably

- 3) Pattern Two — Orchestration Depth: The Real Moat in 2026

- 4) Pattern Three — Governance Throughput: Why Good Controls Increase Speed

- 5) Case Reality Check: JP Morgan, Microsoft, Salesforce, ServiceNow

- 6) Why 60% Stall: The Five Failure Modes Behind Pilot Fatigue

- 7) A Practical Evaluation Framework for Leaders (Use This Quarterly)

- 8) The Next 90 Days: A Contrarian Action Plan

Want operator-grade AI insights weekly? Subscribe to Luiz’s newsletter and follow for practical AI agent playbooks.

1) What ‘40% Adoption’ Actually Means (and What It Doesn’t)

Gartner’s forecast of broad agent integration (source) is frequently misunderstood as broad enterprise transformation. It is not. Integration velocity and operational maturity are different curves.

The practical interpretation: more systems will expose agent capabilities, but only a subset of organizations will convert those capabilities into measurable throughput gains.

Adoption percentage is a technology statistic. Transformation percentage is an operating statistic.

2) Pattern One — Workflow Fit: Start Narrow, Win Measurably

Real value appears first in repetitive, high-volume workflows with clear success criteria. JP Morgan’s COiN (Contract Intelligence) platform remains a benchmark for this shape of problem (source).

Microsoft’s direction with Dynamics 365, which focuses on data entry and data exploration, reinforces this narrow-wedge playbook (source).

- Select one costly bottleneck

- Define baseline metrics

- Deploy with explicit handoffs

- Scale only when threshold performance holds
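The four steps above can be sketched as a simple scale gate. This is a minimal illustration rather than a prescribed method: the metric names, the pilot figures, and the 20% improvement threshold are all hypothetical placeholders that each team would replace with its own baseline data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """One workflow metric where lower is better (e.g. minutes per case)."""
    name: str
    baseline: float  # measured before the agent was deployed
    pilot: float     # measured during the pilot

    def improvement(self) -> float:
        """Fractional improvement over baseline; positive means better."""
        return (self.baseline - self.pilot) / self.baseline


def ready_to_scale(metrics: list[Metric], min_improvement: float = 0.20) -> bool:
    """Scale only if metrics exist and every one clears the agreed threshold.

    The 0.20 default is an assumed example value, not a recommendation.
    """
    return bool(metrics) and all(
        m.improvement() >= min_improvement for m in metrics
    )


# Hypothetical pilot numbers for a single bottleneck workflow.
pilot_metrics = [
    Metric("cycle_time_minutes", baseline=40.0, pilot=28.0),  # about 30% better
    Metric("exception_rate", baseline=0.10, pilot=0.09),      # about 10% better
]

print(ready_to_scale(pilot_metrics))  # False: exception rate misses the bar
```

Requiring every metric to clear the bar, rather than an average, keeps one strong number from hiding a weak one.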

3) Pattern Two — Orchestration Depth: The Real Moat in 2026

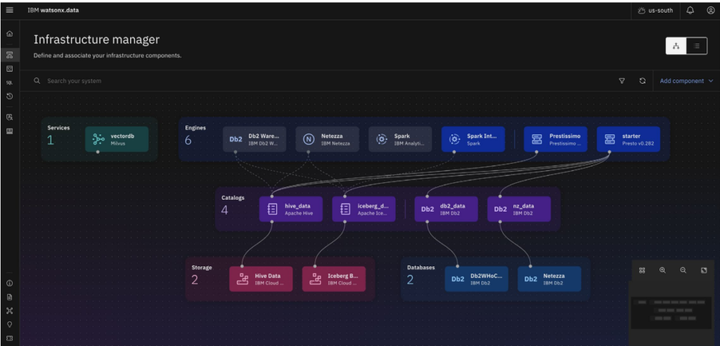

Model quality alone rarely determines enterprise outcomes. Orchestration depth does. That includes context integrity, permission controls, workflow routing, and observability.

Salesforce’s enterprise messaging for Agentforce increasingly centers on this reality: scaling requires supervision, lifecycle tooling, and consistent controls (source).

The easiest thing to copy is a model endpoint. The hardest thing to copy is operational discipline.

4) Pattern Three — Governance Throughput: Why Good Controls Increase Speed

Weak governance creates repeated friction. Strong governance creates reusable launch rails. That is why governance-by-design has become a strategic speed lever in serious enterprise programs (source).

When risk tiers, owners, and escalation paths are predefined, deployment cycles shorten and trust rises.
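One way to picture predefined tiers is a small policy table that every new agent launch looks up instead of renegotiating. The sketch below is an assumption-laden example: the tier names, role names, and approval rules are hypothetical and do not come from any specific governance standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class TierPolicy:
    owner: str                        # accountable role for this tier
    escalation_path: tuple[str, ...]  # ordered roles to contact on failure
    human_approval_required: bool     # must a person sign off before launch?


# Hypothetical policy table: defined once, reused by every launch.
POLICIES: dict[RiskTier, TierPolicy] = {
    RiskTier.LOW: TierPolicy(
        owner="workflow_owner",
        escalation_path=("workflow_owner",),
        human_approval_required=False,
    ),
    RiskTier.MEDIUM: TierPolicy(
        owner="workflow_owner",
        escalation_path=("workflow_owner", "risk_lead"),
        human_approval_required=True,
    ),
    RiskTier.HIGH: TierPolicy(
        owner="risk_lead",
        escalation_path=("risk_lead", "compliance", "executive_sponsor"),
        human_approval_required=True,
    ),
}


def launch_requirements(tier: RiskTier) -> TierPolicy:
    """Return the pre-agreed controls for a tier; no per-project debate."""
    return POLICIES[tier]


policy = launch_requirements(RiskTier.MEDIUM)
print(policy.owner, policy.escalation_path, policy.human_approval_required)
```

Because the lookup is the same for every project, a new deployment inherits its owner and escalation path on day one, which is where the speed gain would come from.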

5) Case Reality Check: JP Morgan, Microsoft, Salesforce, ServiceNow

JP Morgan demonstrates workflow-fit economics. Microsoft demonstrates practical augmentation in CRM flows. Salesforce demonstrates that orchestration and supervision must mature before agents scale. ServiceNow demonstrates service lifecycle execution tailored to a single vertical, telecom (source).

The pattern is consistent: measurable value requires bounded scope and operating controls.

6) Why 60% Stall: The Five Failure Modes Behind Pilot Fatigue

Most stalled programs share five avoidable issues: vanity use cases, context debt, ownership blur, measurement theater, and adoption neglect. A short launch checklist counters them:

- Document baseline KPI before launch

- Test fallback and rollback paths

- Assign accountable owner

- Launch observability with the workflow

- Review at 30/60/90 days

Without this discipline, pilot activity accumulates faster than enterprise value.

7) A Practical Evaluation Framework for Leaders (Use This Quarterly)

Evaluate each candidate with the 3-pattern framework: Workflow Fit, Orchestration Depth, Governance Throughput. Score each pattern from 0 to 5, and scale only the candidates that clear a threshold you set before scoring.

| Pattern | Question | Score |
|---|---|---|
| Workflow Fit | Is value measurable in business terms? | 0-5 |
| Orchestration Depth | Is production supervision reliable? | 0-5 |
| Governance Throughput | Can controls be reused at speed? | 0-5 |

This converts AI prioritization from opinion wars to operating evidence.
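The scoring step can be sketched in a few lines of Python. This is an illustrative example only: the article does not fix a threshold, so the rule used here (at least 3 on every pattern and at least 10 in total) and the candidate names and scores are assumptions.

```python
from dataclasses import dataclass

PATTERNS = ("workflow_fit", "orchestration_depth", "governance_throughput")


@dataclass
class Candidate:
    name: str
    scores: dict[str, int]  # one 0-5 score per pattern

    def __post_init__(self) -> None:
        # Reject missing or out-of-range scores up front.
        for pattern in PATTERNS:
            score = self.scores.get(pattern)
            if score is None or not 0 <= score <= 5:
                raise ValueError(
                    f"{self.name}: {pattern} needs a score from 0 to 5"
                )

    def total(self) -> int:
        return sum(self.scores[p] for p in PATTERNS)


def passes_threshold(c: Candidate, min_each: int = 3, min_total: int = 10) -> bool:
    """Hypothetical rule: no weak pattern, and a solid combined score."""
    weakest = min(c.scores[p] for p in PATTERNS)
    return weakest >= min_each and c.total() >= min_total


# Hypothetical candidates scored in a quarterly review.
invoice_triage = Candidate(
    "invoice_triage",
    {"workflow_fit": 5, "orchestration_depth": 4, "governance_throughput": 3},
)
general_assistant = Candidate(
    "general_assistant",
    {"workflow_fit": 2, "orchestration_depth": 4, "governance_throughput": 5},
)

print(passes_threshold(invoice_triage))     # True: total 12, weakest score 3
print(passes_threshold(general_assistant))  # False: workflow fit is only 2
```

The per-pattern minimum is a deliberate choice in this sketch: a candidate with strong governance but no measurable workflow value should not be able to pass on its total alone.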

8) The Next 90 Days: A Contrarian Action Plan

Prune weak pilots, instrument strong workflows, and scale only proven candidates. Build institutional memory through clear ownership and repeatable controls.

In 2026, winners are not the loudest adopters. They are the best operators.

FAQ: Enterprise AI Agents in 2026

What does 40% AI agent adoption mean for enterprise strategy?

It means integration is becoming mainstream, but business impact still depends on workflow design and operating discipline. Leaders should treat adoption stats as an early signal, then focus on measurable outcomes and accountable ownership.

What is the best first use case for AI agents?

A repeatable, high-volume workflow with clear KPI baselines and bounded scope. Avoid broad, ambiguous assistants at the beginning. Start narrow, prove value, and then scale with confidence.

Why do enterprise pilots stall?

Common causes include poor use-case selection, weak data context, unclear ownership, and missing observability. Most failures are deployment architecture failures, not purely model failures.

How important is orchestration in AI agent success?

Critical. Orchestration defines how agents interact with enterprise data, systems, and human workflows. Strong orchestration is often the difference between an impressive demo and reliable production value.

Does governance reduce speed?

Poor governance reduces speed the most. Good governance improves speed by standardizing controls, ownership, and approvals, which shortens deployment cycles and increases trust.

Which KPIs should leaders track?

Track cycle time, quality, exception rate, escalation volume, and cost or revenue impact at workflow level. Tie each KPI to a named owner and baseline for accurate attribution.
